What is it, and how do we use it?

Guest co-author: Apoorva Shivaram

There are over 1,400 tools used to assist students with their learning in any given school (this is up from around 550 before the pandemic). A lot of choices can be overwhelming, so educators will need to make informed decisions on how to prune the web of tools. Knowing what products are ‘effective’ and how to evaluate their efficacy is critical. How do we, as educators, evaluate the relevance and efficacy of educational products and interventions to ensure that they fit the learning context? This blog post answers this question using an ‘evidence-based framework’. Read on to learn more!

What is the Evidence-based Framework?

Built upon the foundation of three terms – quality, impact, and quantity – the evidence-based framework provides a holistic approach to evaluating research. Think of these concepts as comprising ‘a research house’ where the quality is the foundation of the study, the impact is the influence that the house has on the people who live there, and the quantity is the number of such houses in a neighborhood. In other words, quality refers to the rigor of the research design and the standards of the study, impact addresses the effect of the product on the students, and quantity addresses the number of studies that have been conducted that satisfy the standards for quality and impact level.

What do you mean by quality?

The quality of a study is determined by the rigor of the research design and the standards that it meets. Several factors contribute to study quality, but we would like to highlight two important ones here: first, a study design that includes control and comparison groups, so that the findings of the study can be attributed to the intervention; second, a sufficiently large sample size to support meaningful conclusions and generalizations about the effectiveness (or lack thereof) of the intervention.

In addition to these two factors, several other components help determine whether a study is of high quality: whether it measures the fidelity of implementation (whether the product was used the way it is designed to work), whether it uses a standardized assessment, whether it randomly assigns participants to control and treatment groups or otherwise equates the two groups (so that the groups are similar at the start of the intervention), and whether it accounts for student characteristics that could influence the effectiveness of the intervention (e.g., student demographics).

There are two non-profit organizations that review research studies on the basis of quality – Evidence for ESSA and What Works Clearinghouse. These organizations review research studies, determine whether they meet certain rigorous standards, and summarize the findings with the goal of providing clear and authoritative information about educational tools that improve student success. While these two organizations provide structured, objective reviews and can be considered reliable, their reviews have limitations. If you are interested in learning more about the advantages and disadvantages of these organizations, stay tuned for another blog post coming soon!

Okay, now what do you mean by impact?

The term impact addresses the effect of the product on the students, or the magnitude of the intervention effect. This is usually measured using a statistic known as the ‘effect size’. This statistic standardizes the mean difference between scores, which makes the main effect of an intervention comparable across research studies. Another important property of effect sizes is that, unlike statistical significance, they do not depend directly on the sample size used in the study.

There are various measures of effect size, and different measures are appropriate for different data structures. Kirk (1996) stated that there were over 40 different types of effect size measures that researchers could use depending on their data. For instance, there are four effect size measures if the dependent variable (the value we are interested in) is dichotomous (Pace, 2011), three if it is continuous (Huberty, 2002), and many others for multilevel data (Peugh, 2010). However, three major categories of effect sizes are commonly used in studies: the r family to evaluate the correlation between continuous variables, the d family to measure standardized mean differences in a continuous variable across groups of a categorical independent variable, and ratio statistics to calculate the comparative risk of dichotomous outcomes (Rosenthal, 1994; Nakagawa & Cuthill, 2007; Selya et al., 2012). Because such a wide variety of measures is used, no single value can serve as a cut-off for, or an indicator of, a good study.
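To make the d family more concrete, here is a minimal Python sketch of one common member, Cohen's d with a pooled standard deviation. The function name `cohens_d` and the scores below are our own illustrative assumptions, not data from any study, and a real study may call for a different effect size measure.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(treatment, control):
    """Standardized mean difference between two groups (Cohen's d).

    Uses the pooled sample standard deviation of the two groups.
    """
    n_t, n_c = len(treatment), len(control)
    # Pooled variance: each group's sample variance weighted by its degrees of freedom.
    pooled_var = (
        (n_t - 1) * variance(treatment) + (n_c - 1) * variance(control)
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_var)


# Made-up assessment scores, for illustration only.
treatment_scores = [78, 82, 86]
control_scores = [76, 80, 84]

print(round(cohens_d(treatment_scores, control_scores), 2))  # prints 0.5
```

Here the treatment group scores 2 points higher on average and the pooled standard deviation is 4 points, so the difference is half a standard deviation. Expressing the difference in standard deviation units is what allows comparison across studies that use different assessments.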

Great, that’s quality and impact. What about quantity?

Now that you are equipped with the tools to build your ‘research house’ with knowledge about quality and impact, the ‘materials’ to build the house come from many studies. To get a full understanding of how a product works, it is critical to look at many studies that have been conducted over time with different populations. Applying the foundational concepts of quality and impact, we can assess each study that we come across to determine whether it should be considered in our final evaluation of the product. In addition, it is important to look at the sample size, or the number of participants who took part in the study, to determine whether a study is meaningful. Typically, a sample size of over 350 students can be considered large and can provide a somewhat generalizable finding.

Taken together, quantity refers to the replicability of the findings. In other words, when a new sample of participants is tested, do subsequent studies that ask the same research questions produce the same results? According to an interview with meta-analysis education scholar Dr. Steve Graham, there need to be 6 or more studies conducted with similar findings in order for an effect to be considered properly replicated (Graham, 2022). Within an educational research context, teachers and educators should expect to see studies with similar conditions repeated multiple times in varied contexts before placing any serious weight on the findings.
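As a rough illustration of how quality and quantity can be combined, the Python sketch below screens a list of studies and counts how many point in the same direction. The `Study` class, its field names, and the decision to treat "similar findings" as "a positive effect size" are all our own simplifying assumptions. The two thresholds come from the figures mentioned in this post (over 350 students, 6 or more studies), and using the sample size figure as a hard screen is itself a simplification.

```python
from dataclasses import dataclass

MIN_SAMPLE_SIZE = 350   # "over 350 students" is treated as a large sample
MIN_REPLICATIONS = 6    # 6 or more studies with similar findings (Graham, 2022)


@dataclass
class Study:
    """Hypothetical record of one study of a product."""
    name: str
    sample_size: int
    has_comparison_group: bool
    effect_size: float


def meets_quality_bar(study):
    # Simplified screen: a comparison group and a large sample.
    return study.has_comparison_group and study.sample_size > MIN_SAMPLE_SIZE


def is_replicated(studies):
    # Assumption: "similar findings" means a positive effect in a study
    # that passes the quality screen.
    consistent = [
        s for s in studies if meets_quality_bar(s) and s.effect_size > 0
    ]
    return len(consistent) >= MIN_REPLICATIONS


# Made-up studies, for illustration only.
studies = [Study(f"Study {i}", 400, True, 0.3) for i in range(5)]
print(is_replicated(studies))  # prints False: only 5 qualifying studies
```

A real review would weigh far more than these two screens (fidelity of implementation, assessment type, context), so this is only a starting point for organizing notes on a set of studies.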

In the recent past, journalists have described the field of Psychology as having a “replication crisis”, in which subsequent replication studies have failed to find effects similar to those of the original study. A 2015 study by Aarts and colleagues attempted to replicate 100 studies from three high-ranking psychology journals, and its striking results brought the replication crisis to wider attention. Although 97% of the original studies had significant results, only 36% of the replications did (Aarts et al., 2015). Therefore, it is important to consider the quantity of studies that have published similar findings rather than drawing conclusions from just one.

Check out Dr. Rachel Schechter & Nate Hansford’s article about meta-analysis studies and why they are important.

Is there anything else to consider?

Yes! It is important to examine the context in which the study took place as well as the context in which you will be implementing the product: your classroom or school. Where do these two contexts align, and where do they differ? The more your context overlaps with the study context, the higher the likelihood that the product will work in the way outlined in the research study. In addition, it is important to note the characteristics of the study population (for example, student, teacher, and school demographics), as these could also influence the efficacy of the product.

Another factor to consider is whether the resources used in the study match those available in the context where the product will be applied. For instance, if the study implemented a web-based tool by providing each student in the classroom with a computer, is that feasible in your classroom? The study duration is yet another important factor. For example, if the study used an intervention that lasted 90 minutes a day for 3 months, but in your current context you only have 30 minutes a day of class time, those two durations don’t align.

On a bigger picture level, do the studies that you are evaluating examine student achievement, growth, or something else? If the studies evaluate student achievement, but you are hoping to examine growth (or vice-versa), there is yet another misalignment in the goals.

If any or all of these misalignments apply, it might indicate that the materials used to build your research house do not directly line up with the ones used to build the research house in the studies you are evaluating. While this is not necessarily a red flag, it is important to watch for cases in which the intervention is or is not working as the study suggests, so that you can try to isolate the factors at play in your context.

Combining Quality, Impact, and Quantity

As highlighted above, it is important to consider all three components while building our research houses: quality, impact, and quantity. When all three components are present and, more specifically, when they are ‘high’ (high-quality, high-impact, and high-quantity), the findings can be said to be evidence-based. When one of these three components is ‘low’ or missing altogether, the conclusions become more ambiguous. For instance, when a study is of low quality (rigor of research design), its reported impact (magnitude of intervention effect) is hard to trust, and that, in turn, limits what it adds to the quantity (number of studies and replicability of findings) of evidence.

Unfortunately, there are very few types of instruction in education that have high quality, high impact, and a large quantity of research studies. Some types of instruction that do meet these criteria are phonemic awareness, phonics, repeated reading, morphology, vocabulary, comprehension, and explicit instruction (National Reading Panel, 2000). For most other types of instruction in education, one or more of these components are typically missing. This suggests that rather than evaluating these studies on the basis of a binary model of being evidence-based or not, it is necessary to evaluate them on the basis of a continuum.

So, what does this mean? Where does this leave us?

As educators, we are all passionate about student learning. However, with limited time and resources, we often must rely on the tools at our disposal to judge the research that has been published. While there are organizations that evaluate research, these organizations are not without their limitations. As a result, it is important to equip ourselves to review research on our own, so that we can make sure our students are using evidence-based products that are effective and help them learn.

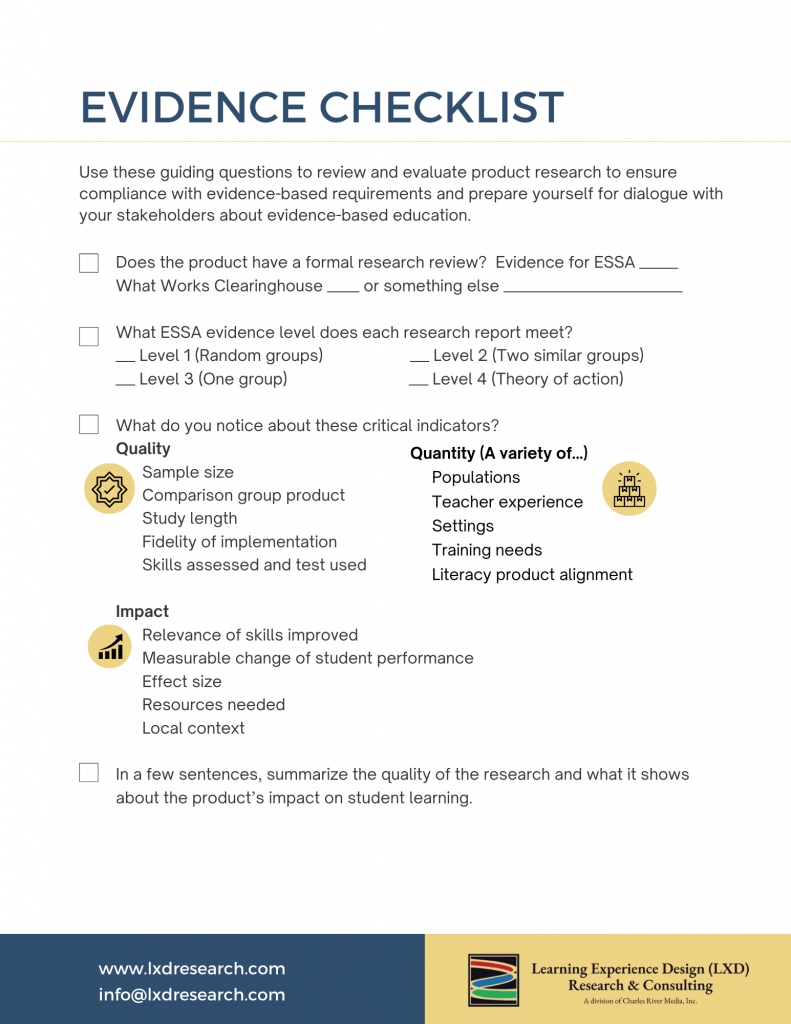

This Evidence Checklist can help review product research for its quality, quantity, and impact!

About LXD Research

LXD Research is an independent evaluation, research, and consulting division within Charles River Media Group, LLC focusing on educational technology. We design rigorous research studies, multifaceted data analytic reporting, and dynamic content to disseminate insights. Visit www.LXDResearch.com.

Recommended citation: Shivaram, A., & Schechter, R. (2023, February 14). Save time with this evidence-based research review framework. LXD Research. https://lxdresearch.com/save-time-with-this-evidence-based-research-review-framework